java.lang.ClassNotFoundException: Class org.apache.hadoop.fs.azurebfs.AzureBlobFileSystem not found

Class `org.apache.hadoop.fs.azurebfs.AzureBlobFileSystem` not found; I see this property is set in the `core-site.xml` file on my HDInsight cluster. Where do I get this jar so I can install it in my Docker container as well?

I'm using the IntelliJ IDE with Scala, and I want to access a text file stored in AWS S3 using IAM user credentials. I have not installed Hadoop on my system; I'm only using library dependencies. I have already done this with the AWS and JetS3t dependencies, but I want to do it with Spark.
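A minimal sketch, assuming Spark reads the file through the s3a connector from `hadoop-aws`: the IAM user's access key and secret key go into the Hadoop configuration under `fs.s3a.access.key` and `fs.s3a.secret.key`, and the path uses the `s3a://` scheme. The names `S3aSettings`, `forCredentials` and `toS3a` are hypothetical, and the helper is kept free of Spark so it compiles on its own; the comment shows where the map would be applied.

```scala
object S3aSettings {
  // Hadoop configuration keys read by the s3a connector (hadoop-aws).
  val AccessKey = "fs.s3a.access.key"
  val SecretKey = "fs.s3a.secret.key"

  // Hypothetical helper: the settings for one IAM user's credentials.
  def forCredentials(accessKey: String, secretKey: String): Map[String, String] =
    Map(AccessKey -> accessKey, SecretKey -> secretKey)

  // Hypothetical helper: rewrite an s3:// or s3n:// path to the s3a:// scheme.
  // Paths that already start with s3a:// are returned unchanged.
  def toS3a(path: String): String =
    path.replaceFirst("^s3n?://", "s3a://")

  // With Spark on the classpath, the settings would be applied like this:
  //   val conf = spark.sparkContext.hadoopConfiguration
  //   forCredentials(sys.env("AWS_ACCESS_KEY_ID"), sys.env("AWS_SECRET_ACCESS_KEY"))
  //     .foreach { case (k, v) => conf.set(k, v) }
  //   spark.read.textFile(toS3a("s3://my-bucket/file.txt")).show()
}
```

Keeping the credentials out of the source (for example in environment variables, as in the comment) avoids committing IAM keys along with the project.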

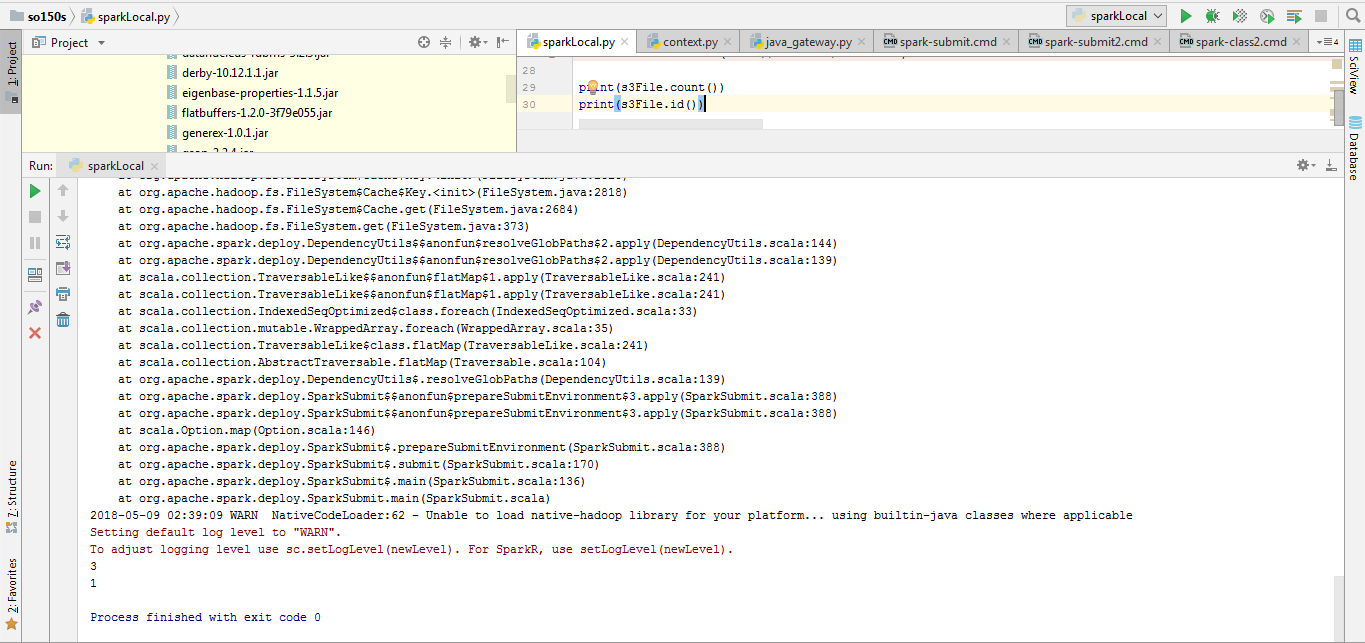

The basic errors I'm getting are:

How should I solve this problem?

I have tried adding various Hadoop and hadoop-aws dependencies, each on its own, and each gave me a different error. For example, adding `"org.apache.hadoop" % "hadoop-aws" % "3.2.0"` gives:

Adding `"org.apache.hadoop" % "hadoop-common" % "3.1.1"` gives:

Adding `"org.apache.hadoop" % "hadoop-aws" % "2.7.3"` gives:

Code:

Actual scala file code

I expected the file to be read and some of its contents printed, but I'm getting the errors instead.

As mentioned above, each dependency produces a different error.

I found one solution online that says to set the Hadoop path, e.g. `export hadoop_path=some path`.

But since I have not installed Hadoop, I can't provide a path where it is installed.

Prayas Pagade


1 Answer

I resolved this by adding the following dependencies from Maven:

Use the same version for the hadoop-aws, hadoop-common and hadoop-mapreduce dependencies.
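In sbt, one way to keep the Hadoop artifacts on a single version is to declare the version once and reuse it. This is a sketch under assumptions: the answer does not list its exact dependencies, so the versions below (Scala 2.12, Spark 3.0.0, Hadoop 3.2.0) and the choice of `hadoop-mapreduce-client-core` as the MapReduce artifact are placeholders. Pick the Hadoop version your Spark build expects.

```scala
// build.sbt: placeholder versions, not taken from the answer
scalaVersion := "2.12.10"

val sparkVersion  = "3.0.0"
val hadoopVersion = "3.2.0"

libraryDependencies ++= Seq(
  "org.apache.spark"  %% "spark-sql"                    % sparkVersion,
  // All Hadoop artifacts share one version so classes from different
  // Hadoop releases are not mixed on the classpath.
  "org.apache.hadoop" %  "hadoop-aws"                   % hadoopVersion,
  "org.apache.hadoop" %  "hadoop-common"                % hadoopVersion,
  "org.apache.hadoop" %  "hadoop-mapreduce-client-core" % hadoopVersion
)
```

Declaring the MapReduce artifact explicitly matters because Spark pulls in its own Hadoop client transitively; listing it at `hadoopVersion` lets sbt evict the older transitive copy.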

Because the bundled Jackson jars were not compatible, I had to override them with a newer version instead of just adding them.
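In sbt, the "override instead of add" step might look like the fragment below. It is a hedged sketch: `2.10.0` is a placeholder, not the version from the answer, and should match the Jackson version shipped with your Spark release.

```scala
// build.sbt: pin one Jackson version across Spark and Hadoop (placeholder version)
val jacksonVersion = "2.10.0"

dependencyOverrides ++= Seq(
  "com.fasterxml.jackson.core"   %  "jackson-core"         % jacksonVersion,
  "com.fasterxml.jackson.core"   %  "jackson-databind"     % jacksonVersion,
  "com.fasterxml.jackson.module" %% "jackson-module-scala" % jacksonVersion
)
```

`dependencyOverrides` only forces the version of modules that are already in the dependency graph; it does not add them, which is the difference from listing the same jars in `libraryDependencies`.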

Prayas Pagade